I’ve been thinking a lot about how to evaluate MCMC samplers. A common way to do this is to run one or more iterations of your contender against a baseline of something simple, something well understood, or more rarely, the current champion (which seems to remain NUTS, though we’re open to suggestions for alternatives).

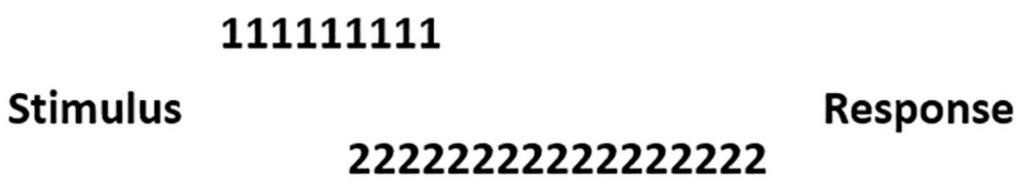

Reporting comparisons of averages (and uncertainty)

Then, what do you report? What I usually see is a report of averages over runs, such as average effective sample size per gradient eval. Sometimes I’ll see medians, but I like averages better here as it’s a fairer indication of expected time over multiple runs. What I don’t often see is estimates of uncertainty in the estimate of the average (std error) or across runs (std deviation). I can’t remember ever seeing someone evaluate something like the probability that one sampler is better than another for a problem, though you do occasionally see those “significance” asterisks (or at least you used to) in ML or search evaluations.

NUTS ESS has high variance

The range of errors we get from running NUTS on even mildly difficult problems implies variations of effective sample size of an order of magnitude. This variance is a huge problem for evaluation. If you follow Andrew Gelman’s advice, then you will run multiple chains and wait for them all to finish. That makes the run time an order statistic which is going to depend strongly on the cross-chain variance.

Time-bound runs?

In the past, Andrew asked about running for a fixed time rather than a fixed number of iterations, but we were worried about inducing bias. Chains tend to slow down in speed when they adapt to a too-small step size or when they wander around big flat regions for too long. It’d be nice if we could fulfill Andrew’s request to figure out how to combine timed runs in a way that doesn’t introduce bias. Suggestions more than welcome! If you run 1024 chains in the style of Matt Hoffman and Charles Margossian, then the order statistics for timing become very sensitive to tails.

Within-class versus across-class variation

This is a classic statistical comparison problem. In the sampler I’m evaluating now that is inspiring me to rethink evals, the within-group variance of NUTS and the within-group variance of the contender is greater than the difference between the mean time of NUTS and the mean time of the contender.

Distributions of ratios?

So I’m thinking that rather comparing ratios of average run times, we should look at histograms of ratios of draws from NUTS’s statistics and draws for the contender’s statistics. This will give us a much wider range of values, which for some of which will have NUTS taking twice as long and for some of them will have the contender taking twice as long. Luckily, it shouldn’t be as bad as a ratio of two normals, as we don’t expect values near zero in the denominator giving us massive values like in generating Cauchy variates (as the ratio of two normals). Averaging ratios doesn’t make a lot of sense because it’s sensitive to direction, but averaging log odds might make sense, because it’s symmetric around even odds.

Just show everything

We don’t need to try to reduce things to a single statistic. I often argued when I was in NLP that I’d rather see a confusion matrix than an accuracy (sensitivity, F-measure, etc.) and I’d rather see the ROC curve than just get the single value for AUC. These reductions to single statistics are very lossy and a single number is often a poor summary of a complex multivariate situation. And in NLP, a client often has a specific operating point in mind (high sensitivity, high specificity, etc.).

RMSE of standardized parameters

What I’m currently doing is showing the boxplots of root mean square error for standardized parameters. Standardizing is a trick I learned from Andrew that brings everyone’s scales into alignment. That is, standard error is just standard deviation divided by the square root of effective sample size. Scaling the parameters normalizes all parameters to have a standard deviation of 1, so they’ll be weighted equally when comparing results.

Why not minimum ESS?

The problem with minimum ESS is that it’s an extreme order statistic in high dimensional models, and is thus going to be difficult to estimate. It also ignores the errors on all the other parameters.

Direct standard error calculation

If you know the true value and can perform multiple estimation runs, you can calculate standard error empirically as the standard deviation of the errors. Given the standard error, we can back out expected ESS from the relation that standard error is standard deviation divided by the square root of ESS. I learned that technique from Aki Vehtari, who used it to calibrate our new ESS estimators that discount for approximate convergence (actually a few years old as of now).

Something multivariate?

Maybe I should be thinking of something that’s more multivariate, like the multivariate ESS from Vats, Flegal, and Jones? I’m afraid that would introduce a scaling problem in dimensionality.